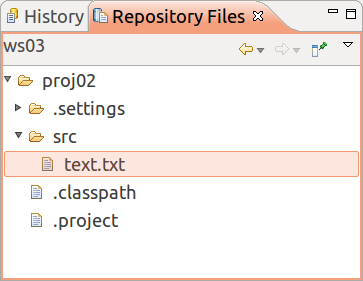

Software Configuration and Management Using Visual SourceSafe and VS.NET. As a best practice, your SCM should contain all project artifacts in the SCM library for easy access, sharing, and version control. Solution files (*.sln). In our case, it is not a big problem that a binary file needs to be locked while a user is working on it. The important question is which SCM makes the best use of storage space for binaries. SVN uses the xdelta differencing algorithm.

We're implementing Ansible Tower and trying to put as much as possible in the playbook git repositories. Coming from Puppet, even though we knew it was a bad idea, we put several large binary installers (130MB) in the git repo, and it caused the r10k updates to take longer than desired. Going forward, we're wondering if there is a best practice for distributing large files to a large number of nodes. We're planning for around 1000 nodes. We see three options:

1. Put them in the repo and use the copy module with a relative path to distribute from the Ansible Tower server. This would mean that when we push out a new agent or updated installer we would be transferring (file size) × (node count) of data. SCM updates would take a bit, especially if they're done before every run. In the example above, that would be 130GB distributed from Ansible Tower.
2. Put them in a static directory on the Ansible Tower server and use the copy module with the full path to distribute from the Ansible Tower server. This would have the same data transfer requirements as above but would remove the files from the repo.
3. Put the files on a central webserver and perform all the transfers using the get_url module. This would remove the load from the Ansible Tower server and allow for a scaling or load-balanced architecture for large file transfers. We could even get creative with an S3 website or something to eliminate the need for managing a webserver.
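
As a rough illustration, the three options might look something like the following Ansible tasks. This is only a sketch, not a tested playbook: the installer file name, the directories, the `artifacts.example.com` URL, and the `installer_sha256` variable are all hypothetical placeholders.

```yaml
# Option 1: installer is committed to the playbook repo.
# A relative src is looked up on the control node (Tower), typically
# in a files/ directory next to the playbook or inside the role.
- name: Copy installer from the playbook repo
  ansible.builtin.copy:
    src: agent-installer.bin                     # hypothetical file name
    dest: /opt/installers/agent-installer.bin    # hypothetical path
    mode: "0755"

# Option 2: installer sits in a static directory on the Tower server,
# outside the repo, and is referenced by its full path.
- name: Copy installer from a static directory on Tower
  ansible.builtin.copy:
    src: /srv/installers/agent-installer.bin     # hypothetical path
    dest: /opt/installers/agent-installer.bin
    mode: "0755"

# Option 3: each node pulls the installer from a central web server,
# so the bytes never pass through Tower.
- name: Download installer from a central web server
  ansible.builtin.get_url:
    url: https://artifacts.example.com/agent-installer.bin   # hypothetical URL
    dest: /opt/installers/agent-installer.bin
    checksum: "sha256:{{ installer_sha256 }}"    # hypothetical variable
    mode: "0755"
```

With options 1 and 2 every byte flows out of the Tower server, while with option 3 Tower only sends the task and each node fetches the file itself. Supplying a `checksum` to `get_url` should also let a node skip the download when the file already at `dest` matches.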

How are other people solving this problem? Putting it on the Ansible server, forgetting about it, and letting Ansible handle the actual data transfer? Personally, we're leaning toward #3 but have not been able to find anyone else doing this online. We want to make sure we're not implementing an anti-pattern. Any feedback would be appreciated.

How distributed the 1000 nodes are, and what sort of bandwidth you're dealing with, is a big factor here.

If you have clumps of nodes in datacenters or somewhat geographically close, building out a pseudo-CDN can be a big help (similar to what RH Satellite does for RPMs). For non-RPM content, a binary artifact repo such as Nexus is a good choice. The maven_artifact module is like the get_url module and brings the Nexus API plus hash checking to the party. Nexus can also have caching proxies, so you push to the main server and, as each proxy is hit, the artifact is cached for that datacenter or region. You could accomplish something similar with a simple web server and Squid proxies. I've personally served up 500 simultaneous downloads out of a highly tuned Nexus instance over a 40-gig pipe to servers in the same datacenter.
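
For reference, pulling an installer out of Nexus with `maven_artifact` might look roughly like this. It is a minimal sketch: the Maven coordinates, the repository URL, and the destination path are made up for illustration, and in a multi-datacenter setup `repository_url` would presumably point at the nearest caching proxy rather than the main server.

```yaml
- name: Fetch installer from Nexus
  community.general.maven_artifact:
    group_id: com.example.agents          # hypothetical coordinates
    artifact_id: agent-installer
    version: "1.2.3"
    extension: bin
    repository_url: https://nexus.example.com/repository/releases/   # hypothetical URL
    dest: /opt/installers/agent-installer.bin                        # hypothetical path
    verify_checksum: download             # check the hash of what was downloaded
```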